At Black Hat 2013, researchers from the Georgia Institute of Technology dissect the iOS security model and present Mactans, a proof-of-concept attack that injects malware into iOS devices via chargers.

Billy Lau: Good evening, ladies and gentlemen! It’s wonderful to be here today. My name is Billy Lau, and I am a research scientist at the Georgia Institute of Technology. Today my colleagues Yeongjin Jang and Chengyu Song and I are going to present our work entitled “Mactans: Injecting Malware into iOS Devices via Malicious Chargers”. Mactans represents a small portion of the findings from a larger mobile security research effort at the Georgia Tech Information Security Center, better known as GTISC.

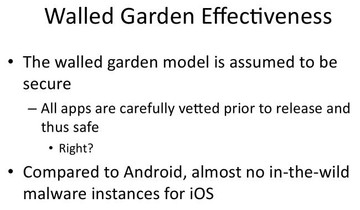

Now let’s start with an overview of iOS security. Before I go further, let me introduce a few terms that I’ll be using throughout. I’ll use the term “apps” to refer to applications, the programs that users install on their iPhones or other “iDevices”. I’ll use the term “iDevices” rather than specifically iPhones or iPads because it’s more general. In iOS security research we deal with a lot of assumptions, and we challenge these assumptions as we find them. The question today is: how secure are iOS devices? And how do these assumptions relate to the average user’s activities, from answering the phone, making calls, or sending SMS, to very mundane ones like charging the phone, which we presume every user must do? We also ask whether there are ways, other than jailbreaking the device, to challenge these security assumptions. I want you to keep these questions in mind, and hopefully you’ll find the answers by the end of our talk.

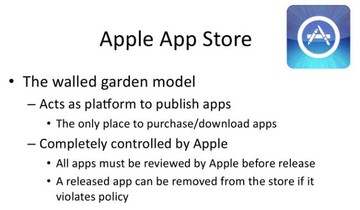

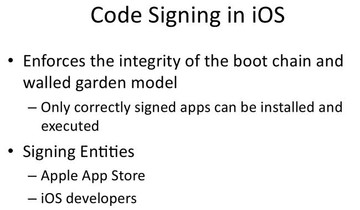

Now, who can become an iOS developer? As part of our research, we went to Apple’s developer portal and applied to become a developer. We submitted our credentials: names, addresses, and credit card numbers. Then we were billed, I think it’s $99, and a few hours later we were approved. And voila, I’m already an iOS developer! As a consequence, I am now able to sign any arbitrary app and then run it on any iDevice. I want you to keep this point in mind as we continue to review Apple’s walled garden model.

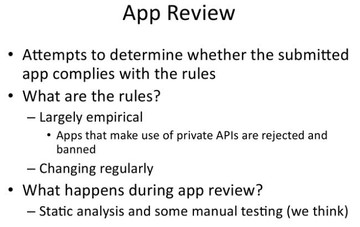

What we found is that apps that make use of private APIs are rejected and banned. Also, these rules probably change quite regularly. Technically, we believe that during app review Apple runs static analysis to check whether your app calls any of these private APIs, and also performs some manual testing, in which a real person installs the app and actually clicks through it. Through this largely empirical process, Apple then approves or rejects the app.
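The static part of the review described above can be pictured as checking the symbols an app references against a list of forbidden names. The sketch below is a toy model under stated assumptions: the denylist entries are made-up placeholders rather than real iOS private APIs, the input is a plain list of symbol names instead of an actual app binary, and the function names are hypothetical. It is not Apple’s review tooling, whose internals the speakers could only infer.

```python
from typing import Iterable, List, Tuple

# Hypothetical denylist: placeholder names, NOT a real list of iOS private APIs.
PRIVATE_API_DENYLIST = frozenset({
    "_privateLaunchApp",
    "_setSystemPasscode",
    "_hiddenRadioControl",
})


def find_private_api_calls(referenced_symbols: Iterable[str]) -> List[str]:
    """Return the referenced symbols that appear on the denylist, sorted."""
    return sorted(set(referenced_symbols) & PRIVATE_API_DENYLIST)


def static_review(referenced_symbols: Iterable[str]) -> Tuple[str, List[str]]:
    """Toy static-review step: reject if any denylisted symbol is referenced.

    Passing this step only forwards the app to manual testing; in this model
    it is not an approval on its own.
    """
    hits = find_private_api_calls(referenced_symbols)
    if hits:
        return ("reject", hits)
    return ("send_to_manual_review", [])


print(static_review(["viewDidLoad", "_setSystemPasscode"]))
# prints ('reject', ['_setSystemPasscode'])
```

A check of this kind can only flag names that actually appear in its input; that limitation is a property of the toy model, not a claim about how Apple’s analysis works.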

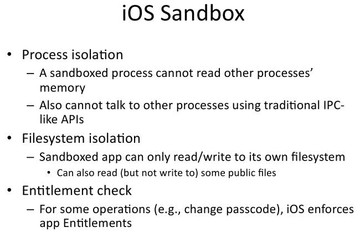

What is process isolation? It means that, for example, app A is not allowed to read any other app’s memory, nor can it talk to any other process using traditional IPC-style APIs. As a result, an app’s ability to communicate with other processes is very limited.

As for filesystem isolation, the protection it brings is that if app A saves a file to disk, no other app installed on the iDevice can read that file, or even learn that it exists. There is one caveat: a certain region of the iOS filesystem is publicly readable, but it is strictly read-only. Because no app can modify it, it cannot serve as a communication channel between apps. So the iOS Sandbox ensures that even an installed app cannot easily attack another app on the system. This is in contrast to traditional platforms like PCs, where such attacks can happen more easily.
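To make the filesystem isolation rules concrete, here is a toy in-memory model. It is only an illustration under stated assumptions: the `SHARED_PREFIX` path, the `ToySandboxFS` class, and the app IDs are all hypothetical, and the model does not reflect how iOS actually lays out or enforces its filesystem. It captures just the two properties described above: each app’s files are invisible to other apps, and the shared region can be read by everyone but written by no one.

```python
from typing import Dict, Optional

# Hypothetical prefix marking the publicly readable, read-only region.
SHARED_PREFIX = "/shared/"


class ToySandboxFS:
    """Toy model of per-app filesystem isolation plus a shared read-only region."""

    def __init__(self, shared_files: Optional[Dict[str, bytes]] = None) -> None:
        # app_id -> {path: data}; each app sees only its own container.
        self._containers: Dict[str, Dict[str, bytes]] = {}
        # Shared files, readable by every app and writable by none.
        self._shared: Dict[str, bytes] = dict(shared_files or {})

    def _store_for(self, app_id: str, path: str) -> Dict[str, bytes]:
        if path.startswith(SHARED_PREFIX):
            return self._shared
        return self._containers.get(app_id, {})

    def write(self, app_id: str, path: str, data: bytes) -> None:
        if path.startswith(SHARED_PREFIX):
            # No writes to the shared region, so it cannot carry messages.
            raise PermissionError(f"{path} is read-only")
        self._containers.setdefault(app_id, {})[path] = data

    def read(self, app_id: str, path: str) -> bytes:
        store = self._store_for(app_id, path)
        if path not in store:
            # Another app's file is indistinguishable from a missing file.
            raise FileNotFoundError(path)
        return store[path]

    def exists(self, app_id: str, path: str) -> bool:
        try:
            self.read(app_id, path)
        except FileNotFoundError:
            return False
        return True


fs = ToySandboxFS({"/shared/info.txt": b"public"})
fs.write("appA", "/Documents/notes.txt", b"private to A")
print(fs.exists("appB", "/Documents/notes.txt"))  # False
print(fs.read("appB", "/shared/info.txt"))  # b'public'
```

Raising `FileNotFoundError` rather than `PermissionError` for another app’s file mirrors the point that app B does not even learn that app A’s file exists.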

Another interesting aspect of the iOS runtime Sandbox is that the runtime performs entitlement checks. What are entitlements? You can view entitlements as special privileges or permissions to access certain sensitive resources. In this context, examples include access to iCloud, push notifications, or changing the passcode. iOS strictly enforces these entitlements at runtime: if an entitlement has not been declared and approved for your app, your app does not have it.
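A runtime entitlement check can be sketched as a lookup performed before a sensitive resource is handed out. In this minimal sketch, the entitlement strings, resource names, and the `request_resource` function are hypothetical placeholders (real iOS entitlement keys are different), and the app’s entitlement set stands in for whatever was declared for the signed app and approved.

```python
from typing import AbstractSet

# Hypothetical resource -> entitlement mapping; real iOS entitlement keys differ.
REQUIRED_ENTITLEMENT = {
    "icloud": "example.entitlement.icloud",
    "push_notifications": "example.entitlement.push",
    "change_passcode": "example.entitlement.change-passcode",
}


class EntitlementError(PermissionError):
    """Raised when an app asks for a resource it is not entitled to."""


def request_resource(app_entitlements: AbstractSet[str], resource: str) -> str:
    """Grant access only if the app holds the entitlement the resource requires.

    `app_entitlements` stands in for the entitlements declared for the signed
    app and approved; anything not listed there is simply absent. An unknown
    resource name raises KeyError in this toy model.
    """
    required = REQUIRED_ENTITLEMENT[resource]
    if required not in app_entitlements:
        raise EntitlementError(f"{resource!r} requires {required!r}")
    return f"granted:{resource}"


app = frozenset({"example.entitlement.push"})
print(request_resource(app, "push_notifications"))  # granted:push_notifications
```

The design choice here is deny-by-default: the check never asks what the app wants, only whether the required entitlement is present, so an undeclared entitlement behaves exactly like a missing one.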
